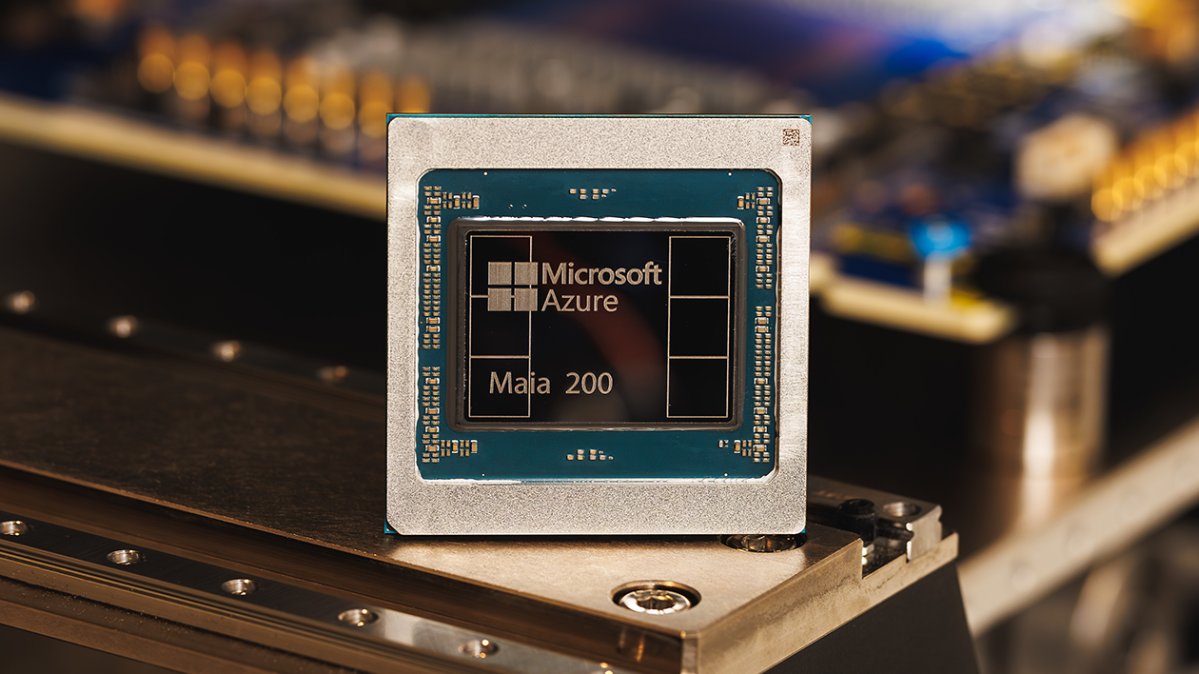

Microsoft has introduced the Maia 200 chip, designed to boost AI inference performance for businesses, offering efficiency and power savings.

Redmond: Microsoft has announced a new chip called Maia 200. The chip is designed to make the AI that businesses use faster and more efficient. It contains over 100 billion transistors, the tiny switches that do a chip's computing.

The Maia 200 is much more powerful than the earlier Maia 100 chip. It can handle more information at once and uses energy more efficiently. This matters because running AI models takes a lot of power and money.

Running a trained AI model to produce answers is called inference. This is different from training, which is how the model is built in the first place. As AI use grows, companies need to make running these models cheaper. Microsoft believes the Maia 200 can help businesses save energy and money.

Microsoft says one Maia 200 chip can run today’s biggest AI models, with capacity to spare for even larger models in the future. The chip is part of a broader trend in which tech companies design their own chips to reduce their reliance on Nvidia, a major chip company.

For example, Google has its TPU chips, which it offers through its cloud service. Amazon also has its own chip, called Trainium. These alternatives help cut costs for AI businesses.

With Maia, Microsoft wants to compete with these other options. The company says the Maia 200 is three times faster than Amazon’s latest chip and outperforms Google’s TPU.

The Maia 200 is already in use by Microsoft’s AI teams and is helping to run the company’s Copilot chatbot. Microsoft has invited a range of groups, including schools and developers, to try out the Maia 200 for their own projects.